Featured Articles

Popular Articles

Dremio Blog: Open Data Insights

What’s New in Apache Iceberg 1.11.0

Dremio Blog: Open Data Insights

What is a model context protocol (MCP) server?

Dremio Blog: Various Insights

Snowflake Competitors: More Affordable and Open Source Alternatives

Dremio Blog: Open Data Insights

Agentic Analytics vs Traditional BI Tools: What Do You Need for the Future?

Browse All Blog Articles

Dremio Blog: Various Insights

Enterprise Data Fabric: Architecture and Best Practices

Learn how enterprise data fabric supports AI, analytics and governed data access across modern hybrid and multi-cloud environments.

Dremio Blog: Various Insights

Enterprise Data Platforms: The Definitive Guide

Explore enterprise data platform architecture, use cases and selection criteria. See how Dremio supports analytics and AI at scale.

Dremio Blog: Open Data Insights

What Are Table Formats and Why Were They Needed?

A table format is a specification that defines how to organize metadata about data files so that query engines can treat them as reliable, transactional tables. It sits between the query engine and the physical files.

Dremio Blog: Various Insights

Iceberg Default Column Values: Schema Evolution Without the Backfill

Adding a column to a large production table used to require a plan. You'd write the migration script, schedule a maintenance window, kick off a backfill job that rewrote every data file to include the new column, and then wait. For a table with billions of rows on a busy lake, that wait could stretch […]

Dremio Blog: Various Insights

The AI Data Gap Is Closing. Not for Everyone.

Something I keep hearing in conversations with customers and prospects right now is a version of the same frustration: we have executive buy-in, budget approved, models selected, and we still cannot ship anything meaningful. The AI initiative is stalled, and everyone is looking at the data team. Then there are the teams who are already […]

Dremio Blog: Various Insights

What Data Leaders Get Wrong About Agentic AI

Your organisation has probably already had the "AI agents" conversation. Maybe it was at a board meeting, maybe it surfaced during quarterly planning, or maybe a team came to you with a proposal and a timeline. Either way, the conversation almost certainly centred on the AI: which model, which vendor, which use case. Very few […]

Dremio Blog: Various Insights

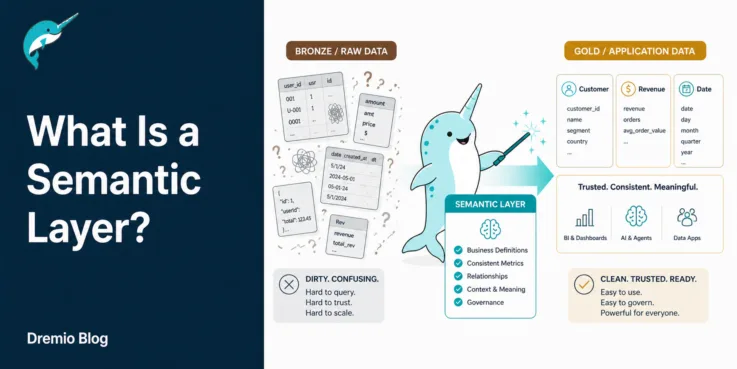

What Is a Semantic Layer?

This guide explores what semantic layers are, their benefits and how they’re implemented within your enterprise data stack.

Product Insights from the Dremio Blog

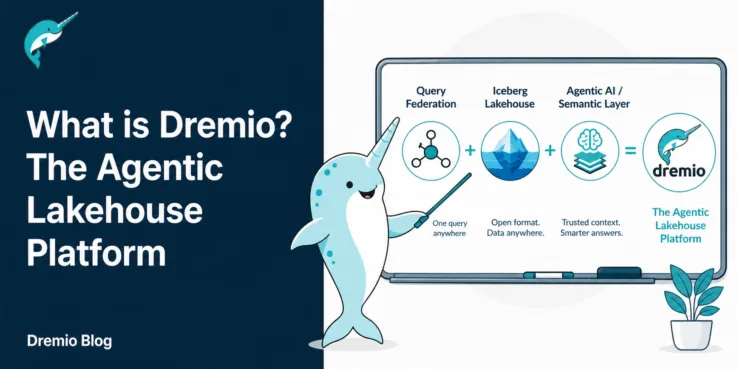

What is Dremio? The Unified Lakehouse and AI Platform

Dremio is not a traditional data warehouse. It is a unified platform that eliminates data silos through a federated query engine, secures your object storage with an Iceberg-based lakehouse, and accelerates insights with an Agentic AI layer.

Dremio Blog: Various Insights

Why Retail Analytics Backlogs Are Costing Your Business Real Margin

Retail analytics decisions, such as markdown timing, campaign activation, and inventory replenishment, are supposed to be data-driven. But at most retailers, the data isn't ready when the decision needs to be made. Your merchandising team sends a request to data engineering on Monday. The report comes back Thursday. By then, the sell-through window has shifted, the promotion […]

Dremio Blog: Various Insights

19 Databricks Alternatives and Competitors

Compare 19 Databricks alternatives for data analytics, AI and lakehouse workloads, built to accelerate SQL and simplify operations.

Dremio Blog: Various Insights

Snowflake Competitors: More Affordable and Open Source Alternatives

The main differences come down to architecture, cost and AI readiness. Snowflake requires copying data into its warehouse and charges per compute credit consumed. Dremio queries data in place with Zero-ETL federation and includes an AI semantic layer, a built-in AI agent, and autonomous optimization. For a full breakdown, see the Dremio vs Snowflake comparison.

Dremio Blog: Various Insights

SAP Intends to Acquire Dremio

Accelerating the Agentic Lakehouse: Today, we’re thrilled to announce that Dremio has agreed to join forces with SAP, pending regulatory approval. Together, we will be able to deliver one open platform where agents reason over all enterprise data, decide, and act. This acquisition will give us the scale and backing to accelerate our agentic vision, […]

Dremio Blog: Open Data Insights

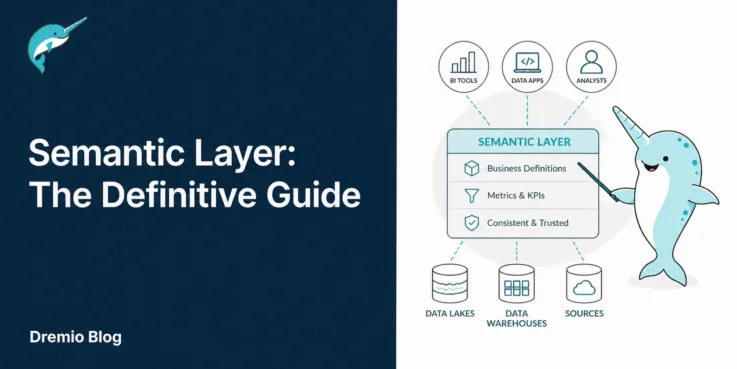

Semantic Layer: The Definitive Guide

The semantic layer is not a one-time project. It is a living system that grows with your organization's data needs. Start small, prove value on the metrics that matter most, and expand from there.

Dremio Blog: Partnerships Unveiled

Query Dremio-governed Iceberg tables directly from Microsoft Fabric (Preview)

Microsoft Fabric now includes the Mirrored Dremio catalog, a new item type that brings Dremio-managed Iceberg tables into OneLake without copying data or building pipelines. If your organization runs Dremio as its lakehouse platform, your Fabric users can now query that data from Power BI, the SQL analytics endpoint, and other Fabric experiences, while the […]

Dremio Blog: Various Insights

Iceberg Deletion Vectors: The Better Way to Delete Rows

For all the many improvements data lakehouses bring to analytics, there's one uncomfortable trade-off: deleting rows is expensive. In a system built around immutable Parquet files, a delete is actually a rewrite. You read the file, filter out the rows you don't want, and write a new file. At scale those I/O costs mount up […]
