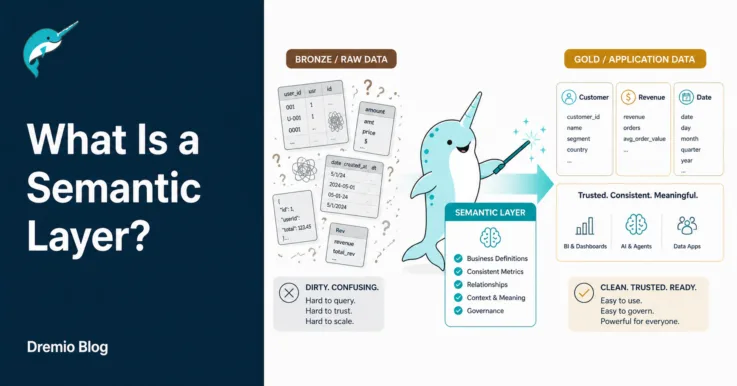

What Is a Semantic Layer?

The semantic layer is a business representation of corporate data for end users. In most data architectures, it sits between your data store (such as a data warehouse or a data lake) and the consumption tools your end users work with. Because it presents data in business-friendly terms, the semantic layer lets data analysts create meaningful dashboards and derive actionable insights […]
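To make the "business representation" idea concrete, here is a minimal Python sketch of a semantic layer. It maps business terms (metrics and dimensions) onto SQL against a warehouse table. The classes `Metric`, `Dimension`, and `SemanticLayer`, along with the `orders` table and its columns, are hypothetical and chosen only for illustration. An in-memory SQLite database stands in for the data warehouse. Real semantic-layer products define their models in their own formats, so treat this as an illustration of the concept rather than any tool's API.

```python
import sqlite3
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    """A business-friendly measure backed by a SQL aggregate expression."""
    name: str
    sql: str


@dataclass(frozen=True)
class Dimension:
    """A business-friendly attribute backed by a physical column."""
    name: str
    column: str


class SemanticLayer:
    """Hypothetical model: maps business terms to SQL over one physical table."""

    def __init__(self, table, metrics, dimensions):
        self.table = table
        self.metrics = {m.name: m for m in metrics}
        self.dimensions = {d.name: d for d in dimensions}

    def compile(self, metrics, dimensions=()):
        """Translate business terms into a SQL string (unknown terms raise KeyError)."""
        select = [f'{self.dimensions[d].column} AS "{d}"' for d in dimensions]
        select += [f'{self.metrics[m].sql} AS "{m}"' for m in metrics]
        sql = f"SELECT {', '.join(select)} FROM {self.table}"
        if dimensions:
            group_by = ", ".join(self.dimensions[d].column for d in dimensions)
            sql += f" GROUP BY {group_by} ORDER BY {group_by}"
        return sql


# The in-memory SQLite database plays the role of the data warehouse.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?)",
    [("EMEA", 100.0), ("EMEA", 50.0), ("APAC", 75.0)],
)

# The semantic layer: business names on the left, physical definitions on the right.
layer = SemanticLayer(
    table="orders",
    metrics=[Metric("Revenue", "SUM(amount)"), Metric("Order Count", "COUNT(*)")],
    dimensions=[Dimension("Sales Region", "region")],
)

# An analyst asks in business terms; the layer generates the SQL.
query = layer.compile(["Revenue", "Order Count"], ["Sales Region"])
rows = conn.execute(query).fetchall()
print(rows)  # [('APAC', 75.0, 1), ('EMEA', 150.0, 2)]
```

The analyst requests "Revenue" by "Sales Region" and never sees `SUM(amount)` or the table name. Because each metric is defined in one place, every dashboard built on the layer computes it the same way.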