Agentic Interface

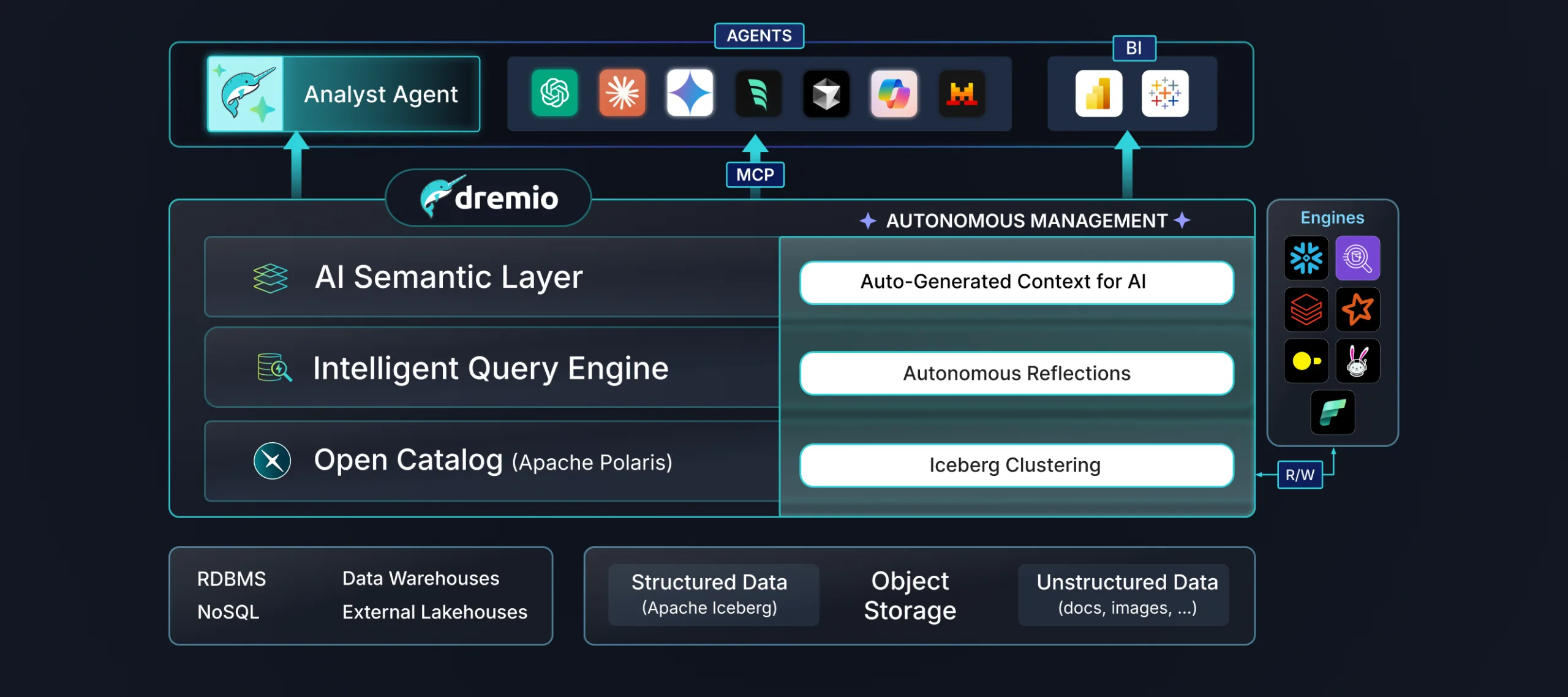

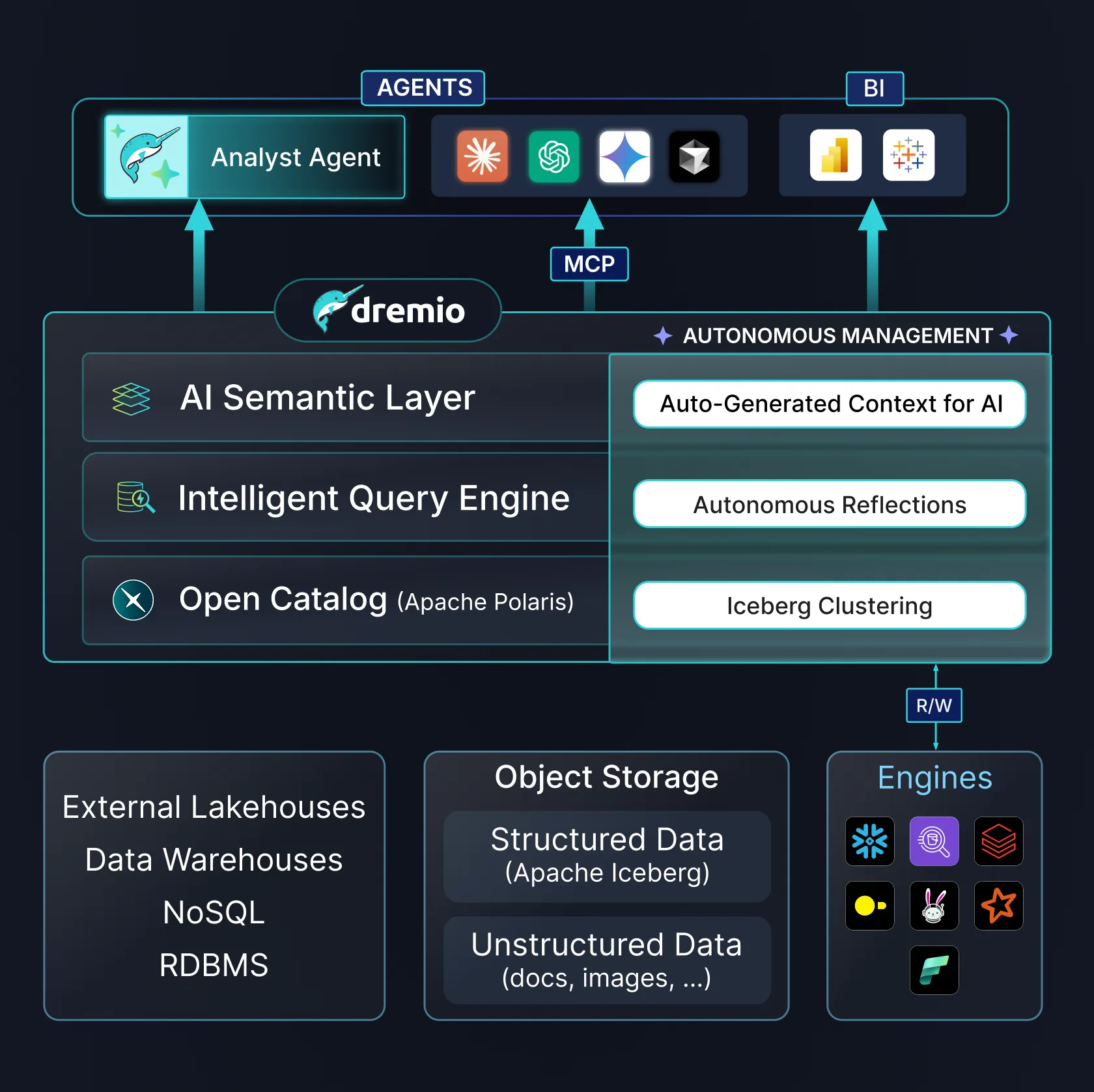

Fastest Path to Agentic Analytics

Next-Gen Analytics

The data platform that delivers the fastest path to trusted AI through unified data, required context, and end-to-end governance, all at the lowest cost.

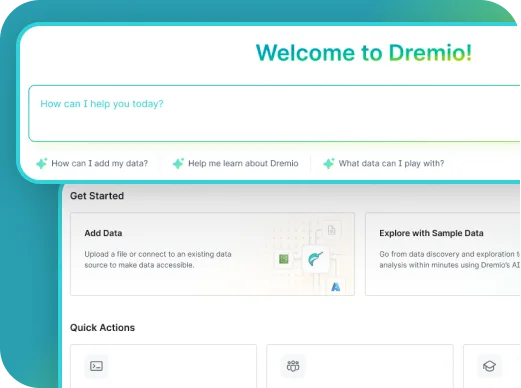

Dremio’s integrated AI Agent lets users ask questions in natural language and get answers directly. The AI Agent dynamically generates SQL, validates results, and delivers insights alongside visualizations.

Fastest Path to Agentic Analytics

Context that Makes Data Understandable

High-Performance Analytics on All Your Data

Unified Metadata and Governance

Performance without Manual Tuning

PRODUCTS

Choose the deployment that best fits your operational model.

Free Query Engine. Deploy self-managed on-premises or in your preferred cloud environment.

Try Dremio With Docker

Run this command in your terminal with Docker.

Once the container is running, Dremio will be accessible in your web browser at localhost:9047.
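If you want to script the wait between starting the container and opening the browser, a small readiness check can help. The sketch below is only an illustration: the function name `wait_for_dremio` and the timeout and interval defaults are assumptions made here, not part of Dremio, and it checks only that something answers HTTP at localhost:9047, not that Dremio has finished initializing.

```python
import time
import urllib.error
import urllib.request


def wait_for_dremio(url="http://localhost:9047", timeout=60.0, interval=2.0):
    """Poll `url` until it answers an HTTP request or `timeout` seconds pass.

    Hypothetical helper for illustration; returns True if the address
    responded, False if the deadline passed without any response.
    """
    # Bypass any configured HTTP proxy, since the target is a local address.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    deadline = time.monotonic() + timeout
    while True:
        try:
            with opener.open(url, timeout=interval):
                return True
        except urllib.error.HTTPError:
            # The server replied with an error status, so it is reachable.
            return True
        except (urllib.error.URLError, OSError):
            # Connection refused or timed out: the server is not up yet.
            pass
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)
```

Calling `wait_for_dremio()` with no arguments polls localhost:9047 for up to a minute and returns `True` as soon as the address responds.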

GET STARTED

See Dremio in Action

Explore this interactive demo and see how Dremio’s Agentic Lakehouse powers AI and BI workloads.

CASE STUDIES

Thousands of companies across all industries trust Dremio.

TRUSTED

Dremio is built to securely protect your data.

LEADERS IN OPEN SOURCE

Driving innovation in the open-source community with contributions to Arrow, Iceberg, and Polaris.

Co-created by Dremio, Apache Arrow is the in-memory and transport format that powers high-performance lakehouse analytics.

As a key contributor, Dremio helps drive Apache Iceberg, the open table format for reliable and scalable lakehouse data.

Apache Polaris, co-created by Dremio, is an open metadata catalog designed for modern lakehouse architectures.
