Dremio is excited to announce the contribution of the Arrow Flight JDBC driver to the Apache Arrow community!

Apache Arrow and Dremio have a rich history together. Let’s take a look at the major milestones over the six-year history of Apache Arrow.

2016 – Dremio Co-Creates Apache Arrow

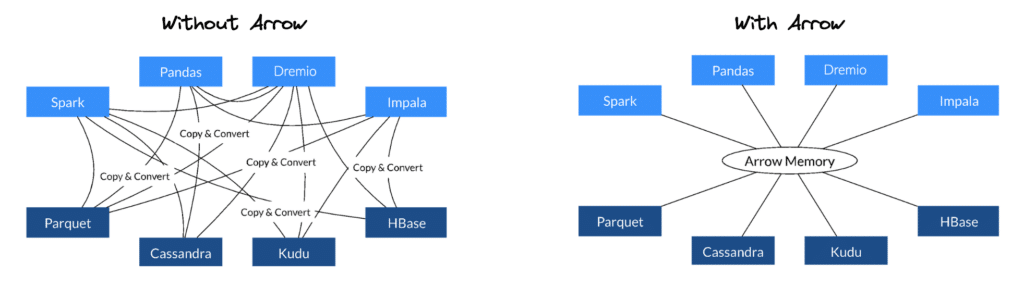

The video linked above gives a great overview of the thoughts and origin behind the Arrow project. In short, there was a looming challenge in data engineering and analytics: having different representations of data across each system/tool in the mix. This caused a lot of overhead for developers and bottlenecks in system architectures that could be addressed if all of the systems could operate on a common data format. Some of the initial implementation arose out of Dremio’s internal data format.

The goal was to create a way to communicate data between systems where it did not need to be converted at every step of the way. This was a two-part problem:

- A common in-memory format was needed, so that every system could represent data internally in the same way.

- A protocol was needed to transmit the data between systems once it was in that common format.

June 2018 – Creation of Gandiva

Following the creation of a common open memory format, Arrow adoption and development really started to take off. At this point, however, developers were each creating their own implementations of operations against the Arrow data structures in memory. It became clear there was a great opportunity to once again maximize efficiency for everyone by open sourcing a component that had been created and used in Dremio. This is why we created Gandiva, an execution kernel for Arrow that provides significant performance improvements for low-level operations on Arrow buffers.

Further, we decided to generalize Gandiva so it could be used with any execution tier and contribute it to the Apache Arrow project.

Gandiva is provided as a standalone C++ library for efficient evaluation of arbitrary SQL expressions on Arrow buffers using runtime code generation in LLVM. While we use Gandiva in Dremio, we worked very hard to make sure it is an independent kernel that can be used by any analytics system. As such, it has no runtime or compile-time dependencies on Dremio or any other execution engine. It also provides Java APIs that use a JNI bridge underneath to talk to the C++ code for code generation and expression evaluation.

September 2018 – First Commit for Arrow Flight

The implementation details for what ultimately became Arrow Flight came out of the Dremio project back in 2017. The first code landed in the Arrow code base in this commit, kicking off Arrow’s evolution from an in-memory representation alone to a way of transmitting those Arrow buffers directly over gRPC.

This enabled applications (clients and servers) to gain significant throughput advantages. One major overhead was eliminated: because both sides already hold the data as Arrow buffers, there is no longer any need to serialize and deserialize it just to send it across the wire. Flight libraries also lay the foundation for supporting parallel connections across nodes between a Flight client and a Flight server.

December 2021 – Arrow Flight SQL for C++ Gets Merged

Arrow and Arrow Flight successfully defined a modern standard for data to be represented in memory and on the wire respectively. However, there were a few immediate additions necessary to maximize the utility of these protocols for our existing tools and development workflows.

When interacting with data systems that want to transmit data via Arrow Flight, the client needs ways to do things such as:

- Execute SQL queries.

- Retrieve catalog metadata (e.g., a database’s tables, a table’s columns).

- Prepare and execute prepared statements.

Arrow Flight SQL is the initiative in the Arrow project that Dremio helped drive to get those tasks accomplished in a standard way. It’s a client-server protocol for interacting with SQL databases built on Arrow Flight.

Arrow Flight SQL provides the standardization of interaction with databases for clients, as well as the performance benefits of Arrow and Arrow Flight.

Further, Arrow Flight SQL is language- and database-agnostic, meaning a single set of client libraries can connect to any server that provides Flight SQL endpoints. This removes the need for clients to install or maintain a client-side driver for each database.

May 2022 – Dremio Helps Technically Review the First Book Dedicated to Apache Arrow

Matt Topol is prominent in the Apache Arrow and Go communities and speaks often at the Subsurface LIVE Conference. With the exposure he got from speaking at Subsurface events, Matt was offered the opportunity to write the first Apache Arrow book, In-Memory Analytics with Apache Arrow, published by Packt. Dremio’s Jason Hughes served as a technical reviewer for the book.

Matt and Jason would then go on to present the following talks on Arrow at a Subsurface Meetup event in September 2022.

- “Understanding Apache Arrow” by Voltron Data’s Matt Topol

- “Apache Arrow Flight SQL: A Universal Standard for High-Performance Data Transfers from Databases” by Dremio’s Jason Hughes

November 2022 – Dremio Contributes the Arrow Flight JDBC Driver to the Arrow Community

Now we have the memory representation of data defined, the wire representation defined, and an easy-to-use standard interface for data systems … all done, right? Well, not quite. Many users use clients like DBeaver and Tableau as well as custom applications which connect to query engines and data warehouses using a JDBC interface.

As a result, it will take all of these clients time to adopt the Arrow Flight SQL native libraries. In the meantime, however, users of these tools want to leverage the benefits of Arrow Flight and Arrow Flight SQL.

This is where the Arrow Flight SQL JDBC driver comes in. This driver has two key benefits:

- Existing BI/SQL clients that rely on JDBC are able to leverage the performance benefits of Arrow Flight over-the-wire without having to change anything in how they interact with sources.

- These clients are able to use this single JDBC driver for every service/database exposing Arrow Flight SQL.

While the two benefits above are useful, the Arrow Flight SQL JDBC driver isn’t a silver bullet for every client tool. If your client tool uses a columnar representation of data internally and is able to leverage the native Arrow Flight SQL libraries, it should do so! You can hear more about the trade-offs and considerations at the 22:24 mark of this presentation on Arrow Flight SQL.
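To make the "nothing changes for JDBC clients" point concrete, here is a minimal Java sketch of what connecting through the driver might look like. It only uses the standard `java.sql` API. The `jdbc:arrow-flight-sql://` URL scheme and the `useEncryption` parameter reflect our understanding of the Arrow documentation and may differ between driver versions; the class name, helper names, host, and port `32010` are placeholders for illustration, and the driver JAR is assumed to be on the classpath.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.sql.Statement;
import java.util.Properties;

// Hypothetical example class; not part of the driver itself.
final class FlightSqlJdbcExample {

    // Builds a connection URL. The scheme and the "useEncryption" parameter
    // are assumptions based on the Arrow docs; check them for your version.
    static String buildUrl(String host, int port, boolean useEncryption) {
        return "jdbc:arrow-flight-sql://" + host + ":" + port
                + "/?useEncryption=" + useEncryption;
    }

    // Runs a query through plain JDBC and counts the returned rows.
    // Nothing here is specific to Arrow Flight SQL except the URL.
    static int countRows(String url, String user, String password, String sql)
            throws SQLException {
        Properties props = new Properties();
        props.setProperty("user", user);
        props.setProperty("password", password);
        try (Connection conn = DriverManager.getConnection(url, props);
             Statement stmt = conn.createStatement();
             ResultSet rs = stmt.executeQuery(sql)) {
            int rows = 0;
            while (rs.next()) {
                rows++;
            }
            return rows;
        }
    }

    public static void main(String[] args) throws SQLException {
        // Placeholder endpoint; replace with your Flight SQL server.
        String url = buildUrl("localhost", 32010, false);
        System.out.println(url);
        // Only connect when user, password, and a SQL string are supplied.
        if (args.length == 3) {
            System.out.println(countRows(url, args[0], args[1], args[2]));
        }
    }
}
```

Run without arguments, the program only prints the URL it would use. Given a user, password, and SQL string, it connects and prints the row count. The same `countRows` code would work against any JDBC source, which is the point: only the URL identifies the Flight SQL endpoint.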

Conclusion

Dremio and Arrow have a long and fruitful history together — and this is only the beginning! There is a lot of cool, useful work happening both in the Arrow ecosystem and in Dremio that will continue to be contributed to the Arrow community.