36 minute read · August 15, 2017

How to Edit Virtual Data Sets with the Dremio Semantic Layer

· Dremio Team

Intro

In this tutorial we’ll work with data provided by Yelp to explore the powerful data preparation features of Dremio. Unlike ETL or Data Prep tools, Dremio does not make copies of the data. Instead, users create virtual datasets, and all data transformation is performed on the fly as it is being accessed through Dremio’s Apache Arrow-based execution engine.

The raw data in this tutorial is provided as JSON, including a number of rich fields such as arrays and nested documents. We will work with this data “as is,” stored on the file system. Most of the tutorial applies if you happen to have this data in Elasticsearch or MongoDB.

Assumptions

To follow this tutorial you should have access to Dremio. If you haven’t done so already, deploying Dremio is easy - see the Quickstart for instructions.

We also think it will be easier if you’ve read Getting Oriented to Dremio and Working With Your First Dataset. If you have questions along the way, don’t hesitate to ask on the Dremio Community Site.

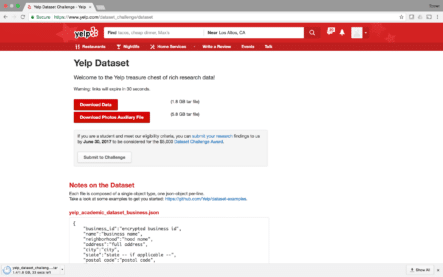

Download the Sample Data

To start, let’s download the raw data

You should see five files:

- yelp_academic_dataset_business.json

- yelp_academic_dataset_checkin.json

- yelp_academic_dataset_review.json

- yelp_academic_dataset_tip.json

- yelp_academic_dataset_user.json

The data inside these files is stored in JSON Lines format, also known as newline-delimited JSON, where each line contains a JSON document. For example, the first line in the business dataset is:

$ head -n 1 yelp_academic_dataset_business.json

{"business_id": "vcNAWiLM4dR7D2nwwJ7nCA", "full_address": "4840 E Indian School Rd\nSte 101\nPhoenix, AZ 85018", "hours": {"Tuesday": {"close": "17:00", "open": "08:00"}, "Friday": {"close": "17:00", "open": "08:00"}, "Monday": {"close": "17:00", "open": "08:00"}, "Wednesday": {"close": "17:00", "open": "08:00"}, "Thursday": {"close": "17:00", "open": "08:00"}}, "open": true, "categories": ["Doctors", "Health & Medical"], "city": "Phoenix", "review_count": 7, "name": "Eric Goldberg, MD", "neighborhoods": [], "longitude": -111.98375799999999, "state": "AZ", "stars": 3.5, "latitude": 33.499313000000001, "attributes": {"By Appointment Only": true}, "type": "business"}Use jq as an easy way to pretty print this one-line JSON document:

$ head -n 1 yelp_academic_dataset_business.json | jq .

{

"business_id": "vcNAWiLM4dR7D2nwwJ7nCA",

"full_address": "4840 E Indian School Rd\nSte 101\nPhoenix, AZ 85018",

"hours": {

"Tuesday": {

"close": "17:00",

"open": "08:00"

},

"Friday": {

"close": "17:00",

"open": "08:00"

},

"Monday": {

"close": "17:00",

"open": "08:00"

},

"Wednesday": {

"close": "17:00",

"open": "08:00"

},

"Thursday": {

"close": "17:00",

"open": "08:00"

}

},

"open": true,

"categories": [

"Doctors",

"Health & Medical"

],

"city": "Phoenix",

"review_count": 7,

"name": "Eric Goldberg, MD",

"neighborhoods": [],

"longitude": -111.983758,

"state": "AZ",

"stars": 3.5,

"latitude": 33.499313,

"attributes": {

"By Appointment Only": true

},

"type": "business"

}Connect to the Data Source

If you’re running a multi-node Dremio cluster, you’ll want to place the JSON files on a NAS (identically mounted on all Dremio nodes), Hadoop cluster or Amazon S3. If you’re running a single-node Dremio cluster, you can place the files on the node’s local file system.

Click on the New Source button and add a “NAS” source. The NAS source supports network-attached storage, in the case of a multi-node Dremio cluster, as well as direct-attached storage (i.e., the local file system) in the case of a single-node Dremio cluster.

Name the new source “public,” and enter the desired file system path. In our example the Yelp JSON files are at /Users/jdoe/dev/data/yelp_dataset_challenge, so let’s add the path /Users/jdoe/dev/data.

Click the Save button, and you’ll see the main screen with the new data source.

Converting Files to Datasets

Notice the folder yelp_dataset_challenge in the new source. Dremio makes all your data look like it’s part of a single relational database, with a hierarchical namespace. You can click on the folder name to see the actual files in it.

Dremio allows you to query self-describing files, such as newline-delimited JSON, by referring to them in a SQL statement. Hover over one of the files, and click the little clipboard icon:

This icon copies the canonical dataset path to the clipboard so that you can paste it into a query. Now click on the New Query button at the top and enter this query:

SELECT * FROM "public"."yelp_dataset_challenge"."yelp_academic_dataset_business.json"

Notice that the file is now treated as a physical dataset (purple dataset icon):

This means that if you connect an external tool like Power BI, Tableau or Qlik Sense to Dremio, you’ll see this dataset in the namespace.

Another way to convert a file into a physical dataset is by clicking on the conversion button on the right-hand side.

Let’s click the conversion button on the yelp_academic_dataset_checkin.json file. You should see the Dataset Settings dialog which allows you to specify the format of the file. In this case we have a newline-delimited JSON file, so there are no settings, but in the case of a CSV file, there are various settings that you can control, including the line and field delimiters.

Once you click Save, Dremio will automatically open up the dataset viewer on the new dataset.

Getting Started

Dremio enables you to edit virtual datasets by interacting with the dataset in a visual manner, resulting in a new virtual dataset. Note that Dremio does not create a copy of the data, so there is no overhead to creating a new virtual dataset.

Let’s get started by working with the business dataset, which you’ll find by browsing to public.yelp_dataset_challenge or using the search box at the top (enter “business” in the search box).

In this dataset, the column named type contains the same value for all records, so let’s drop it. Click on the small arrow at the right-hand side of the column header to open the transformation menu, and select Drop.

As you do that, you’ll notice a couple changes on the screen (in addition to the column disappearing).

First, we’ve gone from a physical dataset, with a clear name and location, to a new dataset with the generic name “New Query”. Dremio never modifies your physical datasets (files, Oracle tables, Elasticsearch indexes/types, MongoDB collections, etc.). Any time you start your data preparation journey from a physical dataset, the first change will automatically initiate a new, unsaved virtual dataset called New Query.

Second, the number of fields is now 15 instead of 16, which you’ll see in the Fields hyperlink at the top right.

Third, there’s a new dot in the dataset history bar. You can hover on it to see what the transformation was.

Filtering Data

Dremio makes it easy to filter the records in a dataset based on the values of a column. The actual experience depends on the data type. You can filter by selecting Keep Only or Exclude from the transformation menu, or by selecting a value in one of the cells.

Filtering by Pattern

Let’s keep only the records corresponding to businesses in the state of Wisconsin. To do that, select the text WI in one of the cells of the state column and choose the Keep Only option.

At the top of the screen you’ll see that we are in the Keep Only action, using the Pattern method, which utilizes a pattern (or regular expression) to match the desired records.

Dremio automatically recommends a few possible patterns to use. Each pattern is represented by a two-sided card.

In this case, you can see that the first card represents the pattern “Contains WI”, meaning that values containing the two characters “WI” will match. The bar at the bottom of the card indicates that 947 records contain “WI”, and 9053 records do not. Note that Dremio is testing a sample of the records, rather than all records in the dataset.

You can also click on the small pencil icon to flip the card and edit the selected pattern.

Filtering by Custom Condition

In some cases you may want to filter the records via a more complex condition. In these cases, you can utilize the power of custom conditions. Let’s click on Custom Condition to see the expression editor. Here you can utilize hundreds of SQL functions, shown in the function selector on the right, to craft an expression that matches the desired records.

For example, if you wanted to keep only the businesses with short names, you could specify the expression LENGTH(“name”) < 10 (we use quotes here just because name is a reserved word).

Filtering by Values

Let’s change the filtering method to Values by clicking on the word Values.

Here you can see a histogram showing the frequency of different values in the data. 9.5% of the businesses are in WI, while 73% of the businesses are in AZ. The percentages are based on a sample of the data (10,000 records in this case). Note that for numeric and date types, we utilize a continuous histogram (with sliding limits) rather than a discrete histogram.

Click on the Apply button to proceed with this filter, and you’ll see that we now only have Wisconsin based businesses in our virtual dataset.

Extracting Text

Dremio provides numerous capabilities to manipulate text. The easiest way to do so is by interacting with the text in one of the cells. For example, let’s extract the zip code from the address column. The addresses are quite long, so you may not be able to see the zip code unless you make the column a bit wider. Alternatively, when you hover on a cell with a long address, you’ll see a small three-dot icon, and you can click on that icon to see the entire value in a tooltip.

Go ahead and select the zip code in this address, and select the Extract option.

Dremio recommends patterns (or regular expressions), represented by cards, that match the zip code in this address. Each card also shows a few sample values, with the pattern highlighted in each one.

You’ll want to choose a pattern in which the desired substring (i.e., the zip code) is highlighted. For example, the following card represents an incorrect pattern, which works for the address we originally selected but fails to grab the zip code in at least one of the samples on the card.

In the main data grid, you’ll see a much larger preview of the currently selected pattern:

From this sample it seems that the currently selected card is doing the job. Notice the options just below the cards. You can choose the name of the new column, and you can drop the source column. You can, of course replace the values by keeping the same column name and checking the Drop Source Field checkbox. For this tutorial, let’s name the new column zip and keep the address column.

Note that you can also rename a column in the main data grid by clicking on the column name and editing in place.

Note that we can also utilize the power of regular expressions to extract text. For example, let’s extract the first line of the address (i.e., the street). To do so, we could specify a regular expression that captures the first line.

Replacing a Single Value (Text)

Dremio makes it easy to replace a specific value. For example, to replace the value Charter Communications, with the value Charter, simply select the entire value and click Replace:

Choose the card that says “Exactly matches Charter Communications” and enter the replacement value below.

Replacing a Single Value (Numeric or Date)

When you’re dealing with non-text columns, replacing a value is even easier. You’ll notice the Exact method at the top.

Replacing Distinct Values with a Single Value (Text)

Dremio supports two ways to replace multiple text values with a single value:

- Value-based replacement

- Pattern-based replacement

With value-based replacement, you can select the distinct values that you want to replace and enter the replacement value.

With pattern-based replacement, you can provide a pattern or regular expression and enter the replacement value. All values that match that pattern are replaced with the replacement value.

Replacing Distinct Values with a Single Value (Numeric or Date)

Dremio makes it easy to replace multiple values at once. For example, let’s say we’re worried about fake reviews on Yelp skewing our analysis, and we want to limit the review_count to 20 on all businesses. Open the transformation menu, and click on the Replace option.

The Range method allows you to replace a range of values with a single value. In this case, use the lower limit slider, or simply enter the value 20 as the lower limit, so that we can select all values greater than or equal to 20 (and then replace them with 20).

Data Cleansing

In some cases, you may want to replace various different values. For example, you may have messy data in which the same customer name is spelled in several different ways. Dremio allows you to do this, utilizing the all-time favorite Microsoft Excel to create the mapping!

Let’s start by downloading all the unique values in a column into a spreadsheet. For this tutorial, we’ll use the city column. From the transformation menu on the city column, select Group by, and don’t add any measures.

Click Apply to see all the distinct cities in the dataset.

Open the data transformation menu and select Sort Ascending.

From the Download menu at the top, select CSV.

Click on the downloaded CSV to open Excel (or your favorite text editor).

Inside Excel, duplicate the column and change the first value (B1, the column name) in the new column to new_city. Notice that some of the cities (e.g., De Forest, DeForest, Deforest) in the dataset have multiple different spellings. Let’s fix that by entering the correct/consistent value in the new_city column.

Save the spreadsheet. Open the Dremio UI in a new browser tab, and bring up your home space, which can be accessed via the link next to the house icon. Every user in Dremio has a home space, where they can upload spreadsheets and create private virtual datasets.

Click the button at the top-right corner to upload the spreadsheet you just edited into the home space.

Let’s call the spreadsheet-based dataset “city mapping”.

Check the Extract Field Headers checkbox to make sure that the column names aren’t treated as values.

Click Save, and you’ll see that the spreadsheet is now reflected as a physical dataset in your home space.

Let’s move back to the other browser tab, and roll back our work-in-progress dataset to the state prior to aggregating on the city column. Click the version history dots on the right-hand side until you get to the right version.

Click the Join button and select the spreadsheet.

Click Next, and then drag the city column from both tables into the join condition editor. Click the Preview button to see a preview of the joined dataset.

Notice that the dataset now has a new column, called new_city. This column has the business’ canonicalized (i.e., correct) city name. Click Apply to apply the join transformation, and then drop the city and city0 columns.

You can also rename new_city to city, leaving us with a brand new, clean version of our city column.

Saving a Dataset in a Space

It’s time to save the virtual dataset we’ve created. Virtual datasets live in spaces. Each user has their own home space, but you can also create new spaces that can be shared with other users (or groups of users). To create a space, click the New Space button and choose a name. For this tutorial, we’ll call our space “wisconsin”, since we’re dealing with local businesses in Wisconsin.

Once you’ve created a space, you can proceed to save the dataset in that space. Click the Save As button at the top of the dataset viewer.

Once you click Save, notice the name of the dataset change from New Query to wisconsin.businesses.

That little clipboard, which shows up when you hover on the dataset name, makes it easy to copy the full dataset path into the clipboard so that you can, for example, paste it into a SQL query.

Explicit Data Type Conversion

The data type of a column is indicated by a small icon at the top of each column. You can easily convert from one type to another by clicking on the icon and selecting the desired type. For example, to convert the stars column from a float to an integer, select the Integer option.

Depending on the actual conversion, the system may ask you for more information on how to make the conversion. For example, in this case we are converting from float to integer, and there are several ways to do so (round down, up, or to the nearest integer).

Cleaning up Mixed Types

Many data sources, such as files and MongoDB collections, don’t enforce rigid schemas. With these systems, you may find yourself in a situation where a single column contains mixed types. In other words, there are values of different types in the same column. This is certainly not a desirable state, because many SQL functions and BI tools can’t deal with mixed types. Fortunately, Dremio provides built-in capabilities for cleaning up mixed types.

The orange type indicator A# indicates that this is a mixed type column.

Click on the data type icon to open the Clean Data wizard which guides you through the process of cleaning up the column. You’ll have the option of converting the column into a single type, or converting it into multiple columns, one for each type.

Handling Complex Data

Dremio supports the ability to deal with complex (i.e., nested) data, including both arrays and maps.

Extracting a Map Element

Let’s see when businesses typically open at the beginning of the week. You’ll notice that the hours column is a map, based on the data type icon.

Hover over one of the cells in the hours column, and open the tooltip by clicking on the three-dot icon. Then choose the open field under Monday.

The card will automatically show the path you just selected. Click Apply to accept the selection.

We now have a new top-level column called Monday.open which we can utilize in further exploration or curation in Dremio, or in an analysis using an external BI tool.

If you’re curious to quickly see what the most common opening time on Monday is, select the Group by option in the transformation menu.

Here you can quickly aggregate on the Monday.open column and count the number of occurrences of each distinct value.

You can choose the Sort Descending action to sort by count.

Unnesting Arrays

When data is encoded in arrays, it can be challenging to perform the desired analysis with SQL or BI tools. Dremio enables you to unnest an array-type column, resulting in one record per array element. An example will make this easy to understand.

In our new virtual dataset of Wisconsin-based businesses, each business can have multiple categories. You’ll notice, based on the data type icon, that the categories column is an array. To unnest this column, select the Unnest option in the data transformation menu.

What you’ll notice is that each of the categories is now in its own record. For businesses with more than one category, the other fields are duplicated, just as we would observe performing a join between two tables.

With the categories now unnested, we have the ability to perform a variety of standard data exploration and analysis tasks. For example, we can count the number of businesses in the Nightlife category using the Group by action or an external BI tool.

Summary

In this tutorial we walked through a simple data preparation scenario. Dremio makes it easy to work with datasets and create new virtual datasets with a variety of point-and-click actions. If there’s something you can’t do with a visual interaction, you can always utilize SQL to update the definition of a dataset.

To learn more about Dremio, visit our tutorials and resources as well as Dremio University, our free online learning platform, where you can deploy your own Dremio virtual lab. If you have any questions, visit our community forums, where we are all eager to help.

Try Dremio’s Interactive Demo

Explore this interactive demo and see how Dremio's Intelligent Lakehouse enables Agentic AI