Nutanix fast-tracked data provisioning to key business verticals by building a Data-as-a-Service platform with Dremio

- 45 million+ Milli-seconds latency on queries

- UNIFIED ACCESS to data through a single integrated platform

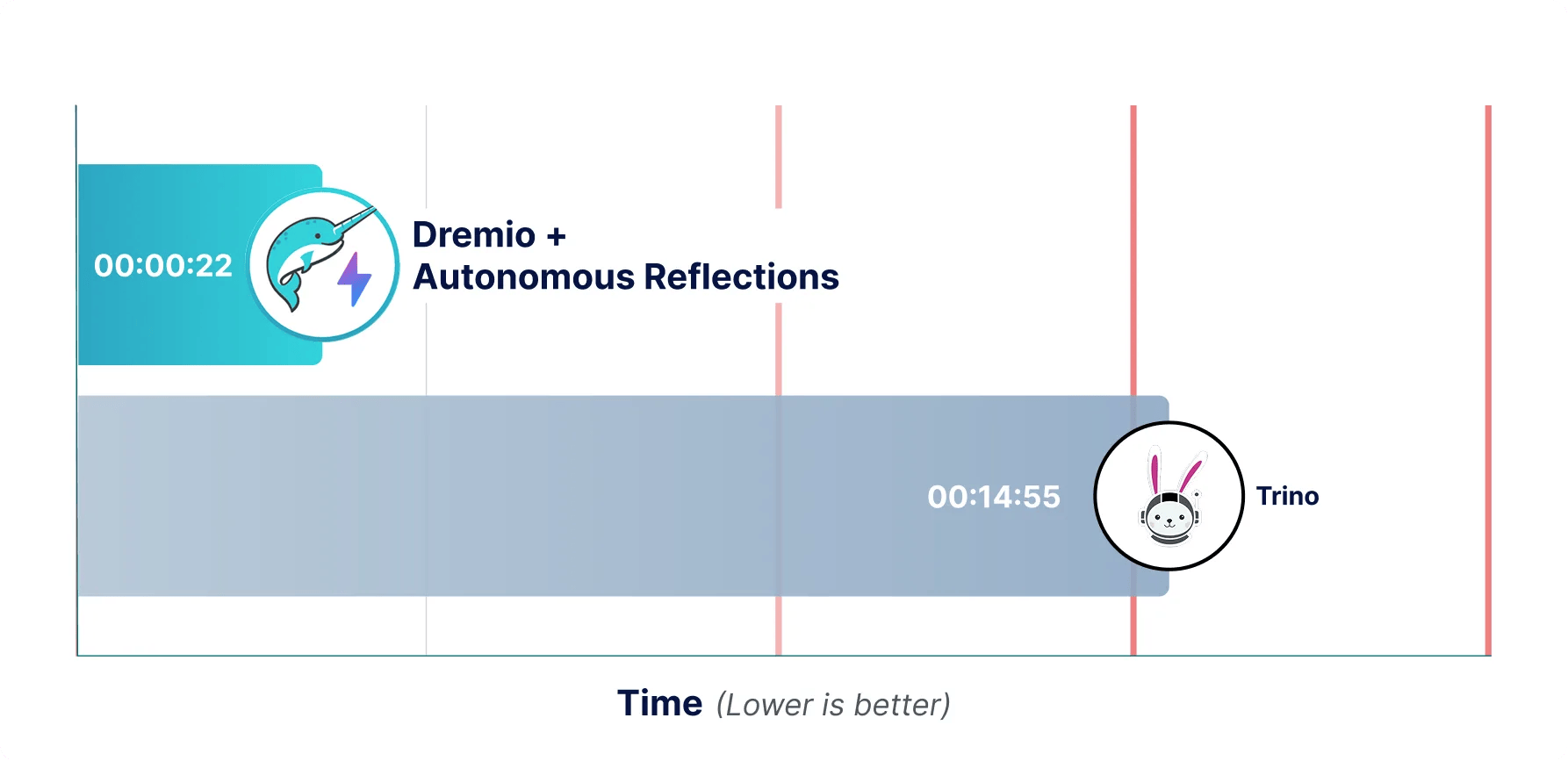

Dremio’s Agentic Lakehouse is purpose-built for enterprise manageability, scale and performance, accelerating analytics and agentic AI to help teams discover, understand and operationalize trusted data faster than Trino’s SQL-engine-only approach allows.

When comparing Dremio vs Trino, the differences go beyond query execution. Dremio delivers a complete, enterprise-grade Agentic Lakehouse with Autonomous Reflections, built-in governance and a cross-source AI Semantic Layer, while Trino remains a distributed SQL engine that requires significant surrounding infrastructure to match the same capabilities.

| Capability | Dremio | Trino |
| --- | --- | --- |
| Query performance | Apache Arrow-native engine with Autonomous Reflections: auto-created, auto-refreshed, auto-retired accelerators | Distributed SQL execution; performance decreases with workload scale; no built-in query acceleration |
| Data architecture | Full Agentic Lakehouse: open Polaris catalog, Iceberg table management, AI Semantic Layer and query engine in one platform | SQL engine only: no catalog, no table lifecycle management, no semantic layer |
| Governance and security | Built-in enterprise RBAC, row-level filtering and column masking enforced at the catalog layer | Relies entirely on third-party tools for access control, metadata and auditing |
| AI readiness | Cross-source AI Semantic Layer at GA; MCP server connects any external AI agent; built-in AI Agent | No native AI or semantic context; no agent connectivity out of the box |
| Ease of use | Self-service analytics with Autonomous Reflections eliminating manual performance work | Requires significant tuning, external tooling and engineering expertise at enterprise scale |
| Scalability and concurrency | Elastic Engines scale dynamically; Autonomous Reflections pre-compute results from observed patterns | Single coordinator bottleneck at high concurrency; Warp Speed caches raw data blocks but does not pre-compute from query patterns |
| Cost efficiency | Reduced TCO through Autonomous Reflections, zero-ETL architecture and lower operational overhead | Management and performance effort drive up true TCO; no built-in acceleration increases compute waste |

Among the leading Trino competitors, Dremio stands out by delivering a fully integrated Agentic Lakehouse rather than a standalone query engine. For enterprises evaluating Trino alternatives, Dremio offers a commercially supported, fully managed option that eliminates the need to assemble and operate a separate stack for governance, metadata, acceleration and AI readiness.

Autonomous Reflections are the single clearest differentiator: no Trino-based deployment, including Starburst Galaxy, has an equivalent. Starburst Warp Speed caches raw data blocks; it does not pre-compute from query patterns.

The most important difference between Dremio and Trino is Autonomous Reflections, the only fully automated query acceleration system in the market. Dremio analyzes query patterns over a rolling 7-day window and automatically creates, refreshes and retires materialized accelerators. Starburst Warp Speed caches raw data blocks on NVMe SSDs, analogous to Dremio C3 caching, but it does not pre-compute results from observed query patterns. Neither Warp Speed nor any Trino-based solution does this.
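To make the rolling-window idea concrete, here is a minimal Python sketch of how a system could decide which accelerators to create and which to retire from a query log. It is a toy model, not Dremio's implementation: the function `plan_accelerators`, the `MIN_HITS` threshold and the string "patterns" are all hypothetical. Only the 7-day window comes from the text above.

```python
from collections import Counter
from datetime import datetime, timedelta

WINDOW = timedelta(days=7)   # rolling window length taken from the text
MIN_HITS = 3                 # hypothetical threshold, not a Dremio setting


def plan_accelerators(query_log, active, now):
    """Return (to_create, to_retire) for a toy accelerator manager.

    query_log: iterable of (timestamp, pattern) pairs, where pattern is any
               hashable description of a query shape (hypothetical).
    active:    set of patterns that currently have an accelerator.
    """
    cutoff = now - WINDOW
    # Count only queries that fall inside the rolling window.
    hits = Counter(p for ts, p in query_log if ts >= cutoff)
    hot = {p for p, n in hits.items() if n >= MIN_HITS}
    to_create = hot - active      # frequent patterns with no accelerator yet
    to_retire = active - hot      # accelerators whose pattern went cold
    return to_create, to_retire


now = datetime(2026, 3, 15)
log = [
    (now - timedelta(days=1), "sales_by_region"),
    (now - timedelta(days=2), "sales_by_region"),
    (now - timedelta(days=3), "sales_by_region"),
    (now - timedelta(days=20), "old_report"),   # outside the window
]
create, retire = plan_accelerators(log, active={"old_report"}, now=now)
print(create, retire)
```

In this sketch a pattern seen three times in the last week earns an accelerator, while one that has not been queried inside the window is retired. A real system would also weigh build cost, storage and refresh frequency.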

Trino is a SQL engine. Every enterprise capability beyond query execution, including governance, metadata management, table lifecycle and performance acceleration, requires separate assembly and ongoing operation. Dremio delivers all of it in a single integrated platform built on Apache Polaris (ASF top-level project, graduated February 2026).

Trino federates queries across sources but provides no semantic modeling layer on top. Dremio’s cross-source AI Semantic Layer is the only one at GA that spans all federated data, making data discoverable and usable by both analysts and AI agents without stitching together separate tools.

Get common questions answered about Dremio Cloud and its AI agent capabilities

Companies often look for a Trino alternative as their analytics environment grows beyond simple queries and federation. Enterprises frequently require a commercially supported solution that can reliably operate at scale, with predictable performance, security and operational stability across thousands of users and workloads.

As requirements expand to include AI workloads, high concurrency and predictable cost control, many organizations seek a data lakehouse solution that delivers built-in acceleration, autonomous optimization and enterprise-grade governance out of the box. These capabilities reduce operational overhead, improve performance consistency and provide a more scalable foundation for modern analytics and AI initiatives.

Trino is fundamentally an SQL engine, and that query-first focus becomes a constraint in modern enterprise lakehouse deployments, especially as teams try to support governed self-service analytics and agentic AI use cases from the same data foundation.

Trino has no built-in semantic layer that consistently packages technical metadata with business meaning for BI users and AI agents. Organizations end up stitching together separate tools to publish trusted, reusable, AI-ready data. Beyond semantics, Trino leaves many enterprise-critical responsibilities outside the core platform, including governance, metadata management, table lifecycle and performance acceleration.

As concurrency and workload diversity increase, this assemble-and-operate approach makes it harder to sustain predictable performance, keep policies consistent and manage total cost of ownership without continuous tuning and integration effort.

Dremio handles queries as an autonomous, continuously optimized system, not just an execution layer. In our Agentic Lakehouse platform, data query optimization is a core capability: the platform learns from real query patterns and automatically applies improvements that accelerate future workloads with minimal user intervention. Trino-based deployments depend on manual tuning and surrounding components to sustain performance as scale and concurrency grow.

A key differentiator is Autonomous Reflections, which automatically create and maintain optimized query accelerations based on real usage, eliminating the need for manual aggregations or performance engineering. In addition, Columnar Cloud Cache (C3) intelligently caches frequently queried data in columnar format close to compute, dramatically reducing repeated reads from object storage. Note: Starburst Warp Speed also provides raw block caching on NVMe SSDs, which is analogous to Dremio C3. The difference is that Autonomous Reflections pre-compute results; neither Warp Speed nor any Trino-based solution does this.
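The distinction between caching raw data and pre-computing results can be illustrated with a short Python sketch. Everything here is hypothetical (the `ROWS` data, the function names and the in-memory "cache"); it models the general idea only, not how C3, Reflections or Warp Speed are built.

```python
from collections import defaultdict

# Hypothetical raw rows as they might sit in object storage: (region, amount).
ROWS = [("east", 10), ("west", 5), ("east", 7), ("west", 1)]


def total_from_cached_rows(cached_rows, region):
    """Caching keeps raw data close to compute, but each query still scans it."""
    return sum(amount for r, amount in cached_rows if r == region)


def build_precomputed_totals(rows):
    """Pre-computation aggregates once, ahead of the queries that need it."""
    totals = defaultdict(int)
    for region, amount in rows:
        totals[region] += amount
    return dict(totals)


cache = list(ROWS)                       # stand-in for a local block cache
precomputed = build_precomputed_totals(ROWS)

# Both paths give the same answer; the second is a lookup instead of a scan.
print(total_from_cached_rows(cache, "east"))
print(precomputed["east"])
```

Both calls return the same total. The cached path avoids the trip to object storage but still scans every row per query, while the pre-computed path pays the aggregation cost once and then answers with a lookup.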

Yes, Dremio simplifies much of the integration and operational work that comes with deploying Trino in an enterprise. Where Trino deployments require assembling and operating separate components for security, metadata/catalog, governance and performance management, Dremio delivers these capabilities in a single integrated data lakehouse platform.

With Dremio Cloud (AWS), organizations get a fully managed service that requires no infrastructure setup or ongoing maintenance, automatically handling performance optimization, resource management and scaling. For self-managed environments (Azure, GCP, on-premises), Dremio Enterprise runs natively on Kubernetes for fast deployment and elastic scaling using standard cloud-native tooling.

Using Dremio vs Trino can strengthen data governance and security by providing more capabilities natively, instead of depending on multiple external systems. Dremio supports fine-grained, policy-based access controls and centralized administration through the Apache Polaris catalog, so permissions are applied consistently across datasets, users and AI agents.

Dremio’s Iceberg-native catalog centralizes technical, business and AI metadata in one place, enabling governance workflows such as discovery, lineage visibility and consistent semantic definitions. Compared to Trino deployments that require separate catalogs and security integrations, Dremio’s unified approach reduces operational complexity and improves compliance posture.
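As a rough illustration of what fine-grained controls such as row-level filtering and column masking mean in practice, the Python sketch below applies a per-role policy to a small result set. The `Policy` class, its fields and the sample rows are hypothetical and do not reflect Dremio's or Apache Polaris's actual policy model or syntax.

```python
from dataclasses import dataclass, field


@dataclass
class Policy:
    """Hypothetical per-role policy; not Dremio's or Polaris's data model."""
    allowed_regions: set                               # row-level filter
    masked_columns: set = field(default_factory=set)   # column masking


def apply_policy(rows, policy):
    """Drop rows the role may not see, then mask sensitive columns."""
    visible = []
    for row in rows:
        if row["region"] not in policy.allowed_regions:
            continue
        visible.append({
            col: ("***" if col in policy.masked_columns else val)
            for col, val in row.items()
        })
    return visible


rows = [
    {"region": "east", "customer": "Ada", "ssn": "123-45-6789"},
    {"region": "west", "customer": "Bo", "ssn": "987-65-4321"},
]
analyst = Policy(allowed_regions={"east"}, masked_columns={"ssn"})
result = apply_policy(rows, analyst)
print(result)
```

Enforcing a policy like this in one central place, rather than in each client tool, is what lets the same rules apply to dashboards, ad hoc SQL and AI agents alike.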

Dremio is more future-ready because it is built as a governed, high-performance lakehouse layer for both human users (dashboards, self-service analytics) and machine users (AI agents), not just a distributed query engine runtime. The cross-source AI Semantic Layer provides consistent business and technical context to analysts and AI agents alike, ensuring trusted metrics and enabling AI systems to reason over data accurately while respecting governance and security controls.

Dremio includes a native AI Agent for natural-language exploration, automated insight generation and SQL generation directly on governed data. SQL-embedded AI functions (AI_CLASSIFY, AI_GENERATE, AI_COMPLETE) enable teams to work with unstructured and semi-structured data directly in SQL without separate ETL pipelines. The MCP server enables secure integration with external AI agents such as Claude, ChatGPT and Cursor.

Boost efficiency with AI-powered agents, faster coding for engineers, instant insights for analysts.