Nutanix fast-tracked data provision to key business verticals by building a Data-as-a-Service platform with Dremio

- 45 million+ Milli-seconds latency on queries
- UNIFIED ACCESS to data through a single integrated platform

Dremio’s Agentic Lakehouse delivers open, Iceberg-native analytics with Autonomous Reflections, a cross-source AI Semantic Layer and lower operational complexity — helping enterprises move faster without the cost and constraints of Databricks.

Comparing Dremio vs Databricks? An open architecture delivers greater flexibility, lower cost and faster time-to-value. Dremio’s Iceberg-native Agentic Lakehouse enables analytics and powers AI readiness directly on open data without platform lock-in or data duplication.

| Capability | Dremio | Databricks |
| --- | --- | --- |
| Query performance | Apache Arrow-native engine with Autonomous Reflections: auto-created, auto-refreshed, auto-retired accelerators | High performance on Delta with Photon; requires manual tuning on Iceberg workloads |
| Data architecture | Fully open lakehouse engine built on Apache Polaris (ASF top-level project) with full R/W Iceberg REST API | Databricks-centric; Unity Catalog is proprietary managed (not Polaris-based or Iceberg-native) |
| Governance and security | RBAC at the catalog layer; row-level filtering and column masking in the Dremio engine; credential vending for external engines | Centralized governance through Unity Catalog with platform-dependent access controls |
| AI readiness | Cross-source AI Semantic Layer at GA; agents see all federated data; MCP server connects any external AI agent | Mosaic AI is a powerful ML platform, but metric views are scoped to Unity only; agents see Unity data only |
| Ease of use | Intuitive self-service analytics; Autonomous Reflections eliminate manual performance engineering | Requires Spark expertise and ongoing engineering effort for tuning and pipeline management |
| Scalability and concurrency | Elastic Engines scale dynamically; Autonomous Reflections deliver predictable performance without additional clusters | Strong at scale; concurrency can drive unpredictable costs via usage-based auto-scaling |
| Cost efficiency | Up to 40% lower TCO through reduced compute, zero-ETL architecture and autonomous optimization | DBU-based pricing, cluster overhead and engineering effort drive up true TCO |

For enterprises seeking an alternative to Databricks, Dremio delivers a truly open, self-service lakehouse built for agentic analytics and high-performance SQL workloads without platform lock-in. Our platform combines Autonomous Reflections, a cross-source AI Semantic Layer, open Iceberg-native catalogs and federated data access to enable governed analytics at scale with lower cost and operational complexity.

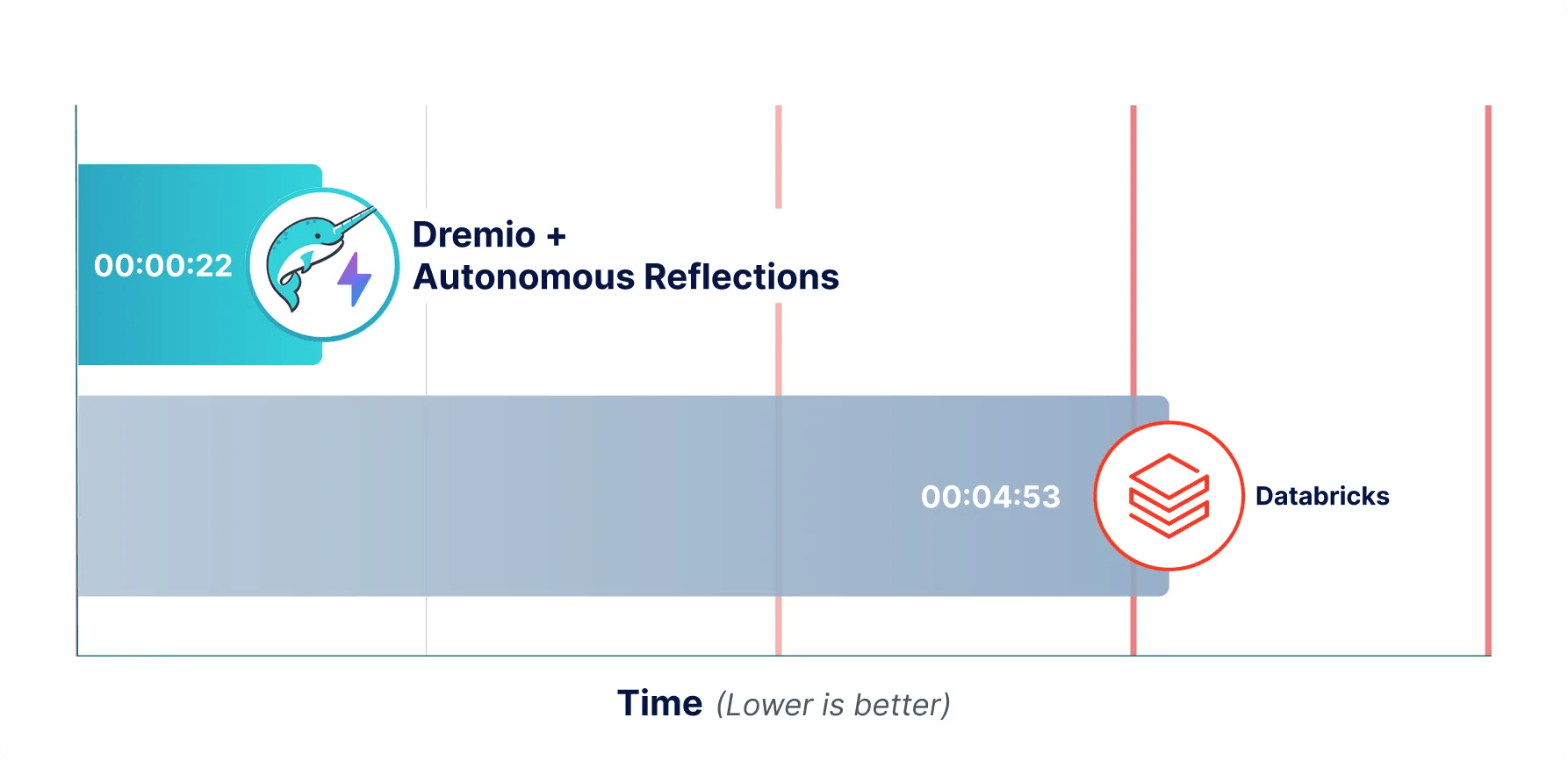

Dremio’s Autonomous Reflections are the only fully automated query acceleration system in the market. Unlike Databricks Predictive Optimization, which handles table maintenance like compaction and vacuum but does not pre-compute query results, Autonomous Reflections learn from real query patterns over a rolling 7-day window and automatically create, refresh and retire materialized accelerators. No DBA required, no manual tuning, no additional cluster provisioning.
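The create-and-retire cycle described above can be pictured with a small sketch. This is an illustration only, not Dremio's implementation: the function name `plan_accelerators`, the `MIN_HITS` threshold and the idea of keying on a query "pattern" string are all assumptions made for the example. Only the 7-day rolling window comes from the text.

```python
from collections import Counter
from datetime import datetime, timedelta

WINDOW = timedelta(days=7)  # rolling window length, taken from the text
MIN_HITS = 3                # hypothetical threshold, not a Dremio setting


def plan_accelerators(query_log, existing, now):
    """Decide which accelerators to create or retire (illustrative only).

    query_log: iterable of (timestamp, pattern) pairs
    existing:  set of patterns that already have an accelerator
    now:       current time as a datetime
    """
    cutoff = now - WINDOW
    # Count only the queries that fall inside the rolling window.
    hits = Counter(pattern for ts, pattern in query_log if ts >= cutoff)
    # A pattern is "hot" if it was seen often enough within the window.
    hot = {pattern for pattern, count in hits.items() if count >= MIN_HITS}
    to_create = sorted(hot - existing)   # hot, but not yet accelerated
    to_retire = sorted(existing - hot)   # accelerated, but no longer hot
    return to_create, to_retire
```

In this sketch, a pattern queried three times yesterday would be scheduled for creation, while an accelerator whose queries all fall outside the window would be scheduled for retirement.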

Dremio’s built-in catalog IS the open standard. It is built on Apache Polaris, an ASF top-level project co-created by Dremio (graduated February 2026), so any Iceberg REST engine reads and writes through the same catalog.

Databricks Unity Catalog is a proprietary managed catalog: the OSS variant is Apache 2.0 licensed but is neither Polaris-based nor Iceberg-native. Customers on Dremio own their data and catalog metadata with no lock-in.

Dremio’s AI Semantic Layer is the only cross-source semantic layer at GA. Databricks metric views are scoped to Unity Catalog only. If a customer’s data lives in Redshift, Postgres, S3 and Snowflake alongside Databricks, their AI agents can only see part of the picture. Dremio’s agents see all of it with business context attached.

Organizations often begin looking for an alternative to Databricks as their analytics and AI requirements evolve and costs increase with scale. While Databricks is commonly adopted for Apache Spark-based data engineering and machine learning, enterprises frequently encounter rising compute, licensing and operational costs — especially as analytical workloads expand to high-concurrency BI and SQL use cases.

In parallel, many organizations are pursuing agentic AI initiatives that require a semantic layer spanning all enterprise data sources, not just Unity Catalog. When agents need governed access to data across Redshift, Postgres, S3 and Snowflake simultaneously, Databricks’ Unity-scoped semantic layer becomes a constraint.

Dremio addresses these challenges by delivering a cross-source AI Semantic Layer, ~31 native federated connectors, Autonomous Reflections for automatic query acceleration and flexible deployment options across cloud and on-premises environments — making it one of the more compelling Databricks competitors for SQL-first, analytics-heavy organizations.

Dremio is the best Databricks competitor because it delivers similar or better analytics and AI capabilities at a significantly lower cost, with less complexity and greater architectural freedom. Many organizations are finding Databricks increasingly expensive as usage scales, while our platform is designed to:

Dremio is also simpler to set up and operate, whereas Databricks environments often require more specialized skills and ongoing operational overhead.

Unlike Databricks, which is tightly coupled to its platform and governance model through Databricks Unity Catalog, Dremio provides an open, flexible alternative with the Dremio Open Catalog, enabling centralized governance across multiple engines, clouds and data platforms without lock-in.

In addition, Dremio supports on-premises, cloud and hybrid deployments, and is purpose-built for the AI era. It combines high-performance query acceleration, federated access to all enterprise data and a semantic layer that provides governed business context for agents, making it a simpler, more cost-effective and more open foundation than Databricks for modern analytics and AI.

Dremio offers a more predictable and cost-efficient solution than Databricks, particularly when price-performance is taken into account. Autonomous Reflections serve repeated queries from pre-computed Iceberg tables, reducing active engine time and lowering effective compute costs. Unlike Databricks’ DBU-based pricing tied to clusters and instance sizes — which can escalate quickly as workloads scale — Dremio Cloud uses consumption-based pricing where Elastic Engines scale dynamically based on demand.

Combined with zero-ETL architecture and open standards that avoid proprietary infrastructure costs, this results in a significantly lower and more predictable total cost of ownership when comparing Dremio vs Databricks.

As an agentic lakehouse platform, Dremio accelerates AI and LLM workloads by combining an Open Catalog with an AI Semantic Layer that provides governance, metadata and business context for both users and AI agents. Instead of copying data into a proprietary system, queries are made in place on object storage, allowing models and agents to train, retrieve and reason over the same governed datasets used for analytics.

Dremio also includes AI functions for classification, extraction, enrichment and generation, enabling the transformation of unstructured data into agentic AI insights directly within the platform.

Beyond acting as an AI-ready, big-data processing foundation, our platform provides its own integrated AI agent and connects to external agents through the MCP (Model Context Protocol), empowering multi-agent collaboration across the lakehouse. Using Dremio’s semantic layer, these agents gain:

Combined with autonomous performance, high concurrency and an open Iceberg catalog that avoids lock-in, Dremio delivers a lakehouse platform purpose-built for enterprise-grade agentic AI and LLM workloads.

Yes, your existing Databricks workflows can be integrated with Dremio without re-architecting your lakehouse. Our platform connects directly to Databricks data sources, including Delta Lake tables, and supports integrating Databricks’ Unity Catalog, so data teams can reuse existing data governance models, metadata and table definitions while enabling new analytics and BI use cases on the same data.

Dremio also provides full read-and-write support for Apache Iceberg and implements the Iceberg Catalog REST API, making it easy to operate in open, multi-engine environments. This lets organizations run Dremio alongside Databricks for interactive SQL, BI acceleration and AI-driven analytics while keeping data in open formats and avoiding duplication or vendor lock-in.
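To make the multi-engine point concrete, here is a minimal sketch of how a client addresses a table through an Iceberg REST catalog. The path layout and the `0x1F` separator for multi-level namespaces follow the Apache Iceberg REST catalog specification. The helper name `table_path` and the namespace, table and prefix values are hypothetical, and the catalog base URL and authentication for any particular deployment are not covered here.

```python
from urllib.parse import quote

# The REST spec joins multi-level namespace parts with the unit separator.
NAMESPACE_SEPARATOR = "\x1f"


def table_path(namespace, table, prefix=""):
    """Build the load-table path for a namespace given as a list of levels.

    prefix is the optional value a catalog may return from its config
    endpoint; it is inserted unchanged when present.
    """
    encoded_ns = quote(NAMESPACE_SEPARATOR.join(namespace), safe="")
    parts = ["v1"]
    if prefix:
        parts.append(prefix)
    parts += ["namespaces", encoded_ns, "tables", quote(table, safe="")]
    return "/" + "/".join(parts)
```

Because every compliant engine builds the same request against the same catalog, a table registered once is addressable from each of them without copying data.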
